Introduction

At a recent EngineeringX dinner in Denver, the conversation around AI in software development quickly turned from hype to reality. One leader mentioned, “I merged a 7,500-line PR yesterday. If a human sent that, I’d close it. But this time, it passed—because our tests were 100%, our architecture guardrails were enforced by agents, and our process caught everything.” That story captures a growing truth in modern engineering: AI is accelerating delivery beyond human review capacity. Teams are now coding at machine speed—but without equal attention to downstream automation, they’re also shipping machine-speed risk. This paradox, fast but fragile, is what both the Harness 2025 State of AI in Software Engineering Report and Google DORA’s 2025 State of AI-Assisted Software Development call out as the defining challenge of our time (Harness, 2025; Google DORA, 2025).

The AI Velocity Paradox

The latest data backs what many of us feel intuitively:

- 63% of organizations say they’re shipping code faster since adopting AI (Harness, 2025).

- Yet 45% of deployments containing AI-generated code introduce problems, and 72% of organizations have experienced at least one production incident caused by AI-generated changes (Harness, 2025).

- 70% worry about runaway cloud costs due to inefficient AI-generated code (Harness, 2025).

- And 95% of developers now use AI tools daily, though 30% admit they don’t trust AI outputs without additional validation (Google DORA, 2025).

In short: AI amplifies everything—good engineering or bad. As DORA puts it, AI “acts as a force multiplier for existing systems and processes” (Google DORA, 2025, p. 17). Without automation maturity, that multiplier can just as easily amplify chaos.

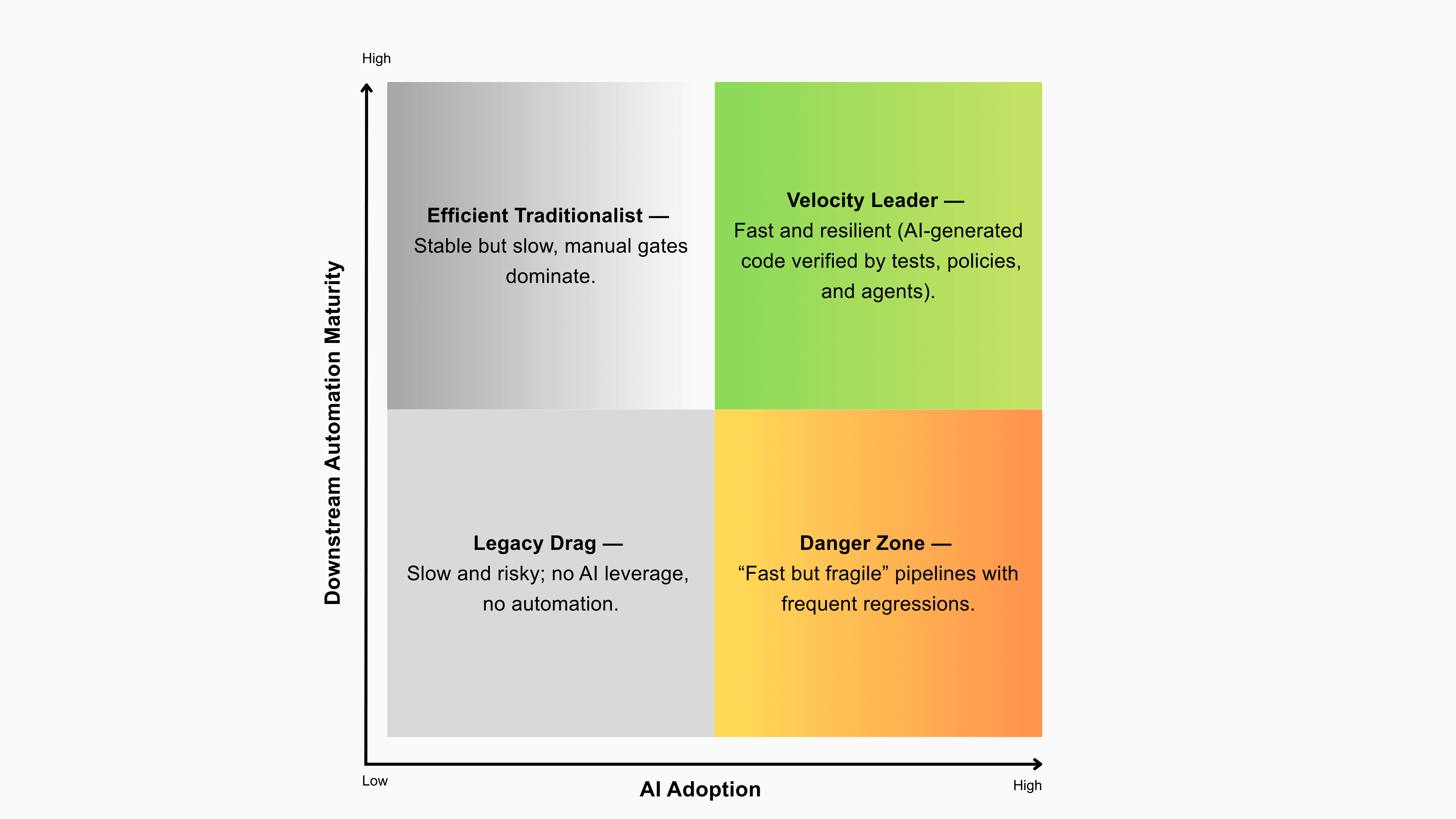

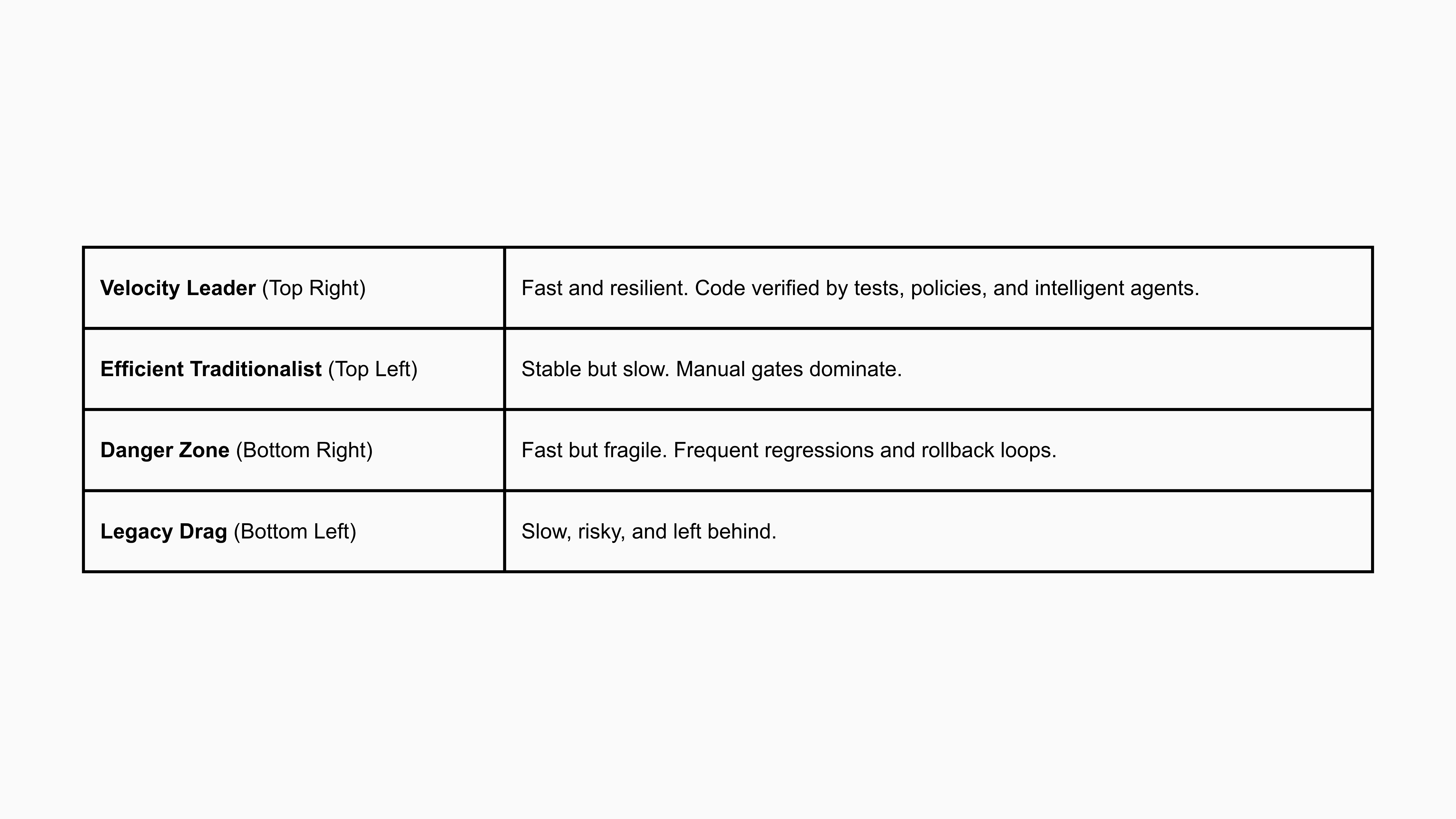

The Quadrant: Where Your Team Sits

To visualize this dynamic, we built the AI Velocity Paradox Quadrant, which plots a team’s AI adoption against its downstream automation.

Teams that marry high AI adoption with high downstream automation—strong CI/CD policies, comprehensive test coverage, fitness functions, and progressive delivery—graduate into Velocity Leaders. Everyone else risks falling into the Danger Zone.
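As a rough illustration of how a team might place itself on the quadrant, here is a minimal Python sketch. The two 0-to-1 scores, the 0.5 cutoff, and the `TeamProfile` type are assumptions made for this example and do not come from either report. The sketch also assumes the Danger Zone means high AI adoption with low downstream automation, and it leaves the other two quadrants unnamed.

```python
from dataclasses import dataclass

# Hypothetical cutoff; neither report defines a numeric threshold.
THRESHOLD = 0.5


@dataclass(frozen=True)
class TeamProfile:
    ai_adoption: float            # 0.0-1.0, e.g. share of changes containing AI-generated code
    downstream_automation: float  # 0.0-1.0, e.g. share of delivery steps that are automated


def quadrant(team: TeamProfile, threshold: float = THRESHOLD) -> str:
    """Place a team on the quadrant from its two scores."""
    high_ai = team.ai_adoption >= threshold
    high_auto = team.downstream_automation >= threshold
    if high_ai and high_auto:
        return "Velocity Leader"
    if high_ai:
        # Assumption: fast code generation without automated validation.
        return "Danger Zone"
    if high_auto:
        return "low AI adoption, high automation"
    return "low AI adoption, low automation"
```

The useful part is less the label than the second axis: raising `downstream_automation` is the only move that takes a high-adoption team out of the Danger Zone in this model.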

Lessons from the Denver Table

Across conversations with engineering leaders from Trimble, EchoStar, Ancestry, Charter Communications, Visa, and others, five insights emerged—each reinforced by empirical data from DORA and Harness.

Fitness Functions Beat Gut Feeling

Teams described agent-enforced “fitness functions” in CI/CD: automated checks that ensure every PR adheres to architectural, test, and resilience standards. These aren’t vanity gates; they are machine-speed safety nets. Harness data shows top-performing teams invest heavily in these automated checks, enforcing test presence, policy compliance, and feature-flag validation before merging (Harness, 2025).

“Trust the process, not the model.” - EngineeringX Dinner Attendee, Denver
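To make the idea concrete, here is a minimal sketch of one architectural fitness function: a check that a module in a given layer does not import from layers it should not depend on. The layer names and the `FORBIDDEN_IMPORTS` rule set are hypothetical, and this is not a description of what any attendee’s pipeline actually runs. It uses only Python’s standard `ast` module.

```python
import ast

# Hypothetical rule set: layer name -> top-level packages it must not import.
FORBIDDEN_IMPORTS = {
    "domain": {"infrastructure", "api"},
}


def imported_packages(source: str) -> set[str]:
    """Return the top-level package names imported absolutely by a module."""
    names = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 means an absolute import; relative imports are skipped.
            names.add(node.module.split(".")[0])
    return names


def boundary_violations(layer: str, source: str) -> list[str]:
    """List forbidden packages that a module in `layer` imports."""
    forbidden = FORBIDDEN_IMPORTS.get(layer, set())
    return sorted(imported_packages(source) & forbidden)
```

A CI step could run `boundary_violations` over every changed file and fail the build when the list is non-empty, so the rule applies equally to human and AI-generated changes.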

Context Is the New Compiler

When developers fed AI richer context (architecture docs, service maps, shared components), the quality of generated code and tests improved dramatically. This aligns with DORA’s observation that context-driven engineering (platform documentation, reusable patterns, and defined standards) correlates directly with stability and reliability (Google DORA, 2025). AI without context behaves like a junior engineer guessing in the dark. Context turns it into a partner.

Tool Choice Matters Less Than Process

From Amazon Q to Cursor to CrewAI, developers agreed: it’s not the model—it’s the maturity of the process. Harness found organizations using multiple copilots, often 8–10 tools, without unified governance (Harness, 2025). That fragmentation results in duplication, inconsistent policies, and missed lessons. Streamlining and standardizing prompt patterns and agent governance outperformed “more tools every quarter.”

Feature Flags Save You (and Your Weekend)

The dinner’s most relatable moment came when someone admitted: “We almost bankrupted a product by rolling out an AI feature with no cost or flag control.” Harness data shows that progressive delivery (feature flags, canaries, and gradual rollouts) cuts incident frequency by nearly half in AI-driven pipelines (Harness, 2025). When code is generated at machine speed, deployment control becomes your last line of defense.
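For readers who have not built one, a gradual rollout can be sketched in a few lines. The example below is an assumption-laden illustration, not the API of any particular feature-flag product: `bucket` and `is_enabled` are hypothetical names, and the hashing scheme is just one common way to get stable per-user assignment.

```python
import hashlib


def bucket(flag: str, user_id: str) -> int:
    """Map a (flag, user) pair to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(flag: str, user_id: str, rollout_percent: int,
               kill_switch: bool = False) -> bool:
    """Decide whether a user sees the feature at the current rollout stage."""
    if kill_switch:
        # The kill switch overrides everything, including a 100% rollout.
        return False
    return bucket(flag, user_id) < rollout_percent
```

Because the bucket is derived from a hash rather than a random draw, a user who sees the feature at 10% still sees it at 50%, and flipping `kill_switch` turns it off for everyone without a redeploy.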

Cost Is the New Quality Metric

AI-generated code can look perfect and still ruin your budget. With 70% of organizations citing cloud cost overruns due to unoptimized AI code (Harness, 2025), teams are starting to add “cost fitness functions”: build-breaking checks that flag inefficient queries, loops, or caching violations. Velocity without fiscal discipline isn’t velocity; it’s debt deferred.
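One possible shape for such a check is a static scan for database calls made inside loop bodies, a common source of N+1 query costs. The sketch below is a heuristic under stated assumptions: the method names in `QUERY_METHODS` are hypothetical stand-ins for whatever your data layer exposes, and a real check would need project-specific tuning to avoid false positives.

```python
import ast

# Hypothetical method names treated as database calls.
QUERY_METHODS = {"execute", "query"}


def queries_in_loops(source: str) -> list[int]:
    """Return line numbers of query-like calls found inside loop bodies."""
    hits = set()
    for loop in ast.walk(ast.parse(source)):
        if not isinstance(loop, (ast.For, ast.AsyncFor, ast.While)):
            continue
        # Only the body is scanned: a query in the loop header runs once.
        for stmt in loop.body:
            for node in ast.walk(stmt):
                if (isinstance(node, ast.Call)
                        and isinstance(node.func, ast.Attribute)
                        and node.func.attr in QUERY_METHODS):
                    hits.add(node.lineno)
    return sorted(hits)
```

A build step could fail when `queries_in_loops` returns anything for a changed file, with an explicit override for the cases where a per-iteration query is intentional.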

From Paradox to Leadership: A 30–60 Day Blueprint

Here’s a pragmatic playbook based on both research and lived experience:

Weeks 1–2: Map and Measure

- Audit your delivery flow for manual gates and low-automation bottlenecks.

- Apply value stream mapping to identify gaps between code generation and code validation.

Weeks 3–4: Encode Fitness Functions

- Automate architectural boundaries, test standards, and policy enforcement in CI/CD.

- Add security, cost, and resiliency checks as part of the build process.
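As one example of encoding a test standard, the sketch below flags changed Python source files that arrive without a matching changed test file. The `test_<name>.py` naming convention and the `missing_tests` helper are assumptions for illustration; adapt the matching rule to your own repository layout.

```python
from pathlib import PurePosixPath


def missing_tests(changed_files: list[str]) -> list[str]:
    """Return changed .py source files with no changed test_<name>.py file."""
    changed = {PurePosixPath(path) for path in changed_files}
    test_names = {p.name for p in changed if p.name.startswith("test_")}
    missing = []
    for path in sorted(changed):
        # Skip non-Python files and the test files themselves.
        if path.suffix != ".py" or path.name.startswith("test_"):
            continue
        if f"test_{path.name}" not in test_names:
            missing.append(str(path))
    return missing
```

Fed with the file list of a PR, a non-empty result can block the merge, which is the “test presence” gate in its simplest form.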

Weeks 5–6: Close the Loop

- Instrument post-deploy verification: synthetic tests, error budgets, and service health metrics.

- Collapse redundant AI tools into a unified framework.

- Track “time-to-rollback” and publish it; visibility builds trust.
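Two of the measurements above reduce to simple arithmetic. The sketch below assumes time-to-rollback is measured from incident detection to rollback completion, and that an error budget is the number of failed requests an availability SLO allows; both definitions and all function names are assumptions for this example rather than definitions taken from the reports.

```python
from datetime import datetime, timedelta
from statistics import median


def time_to_rollback(detected_at: datetime, rolled_back_at: datetime) -> timedelta:
    """Elapsed time from detecting a bad deploy to finishing its rollback."""
    if rolled_back_at < detected_at:
        raise ValueError("rollback cannot finish before detection")
    return rolled_back_at - detected_at


def median_time_to_rollback(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Median over (detected_at, rolled_back_at) pairs; a figure worth publishing."""
    seconds = [time_to_rollback(d, r).total_seconds() for d, r in incidents]
    return timedelta(seconds=median(seconds))


def error_budget_remaining(slo: float, total_requests: int, failed_requests: int) -> float:
    """Fraction of the error budget still unspent; negative when overspent."""
    allowed_failures = (1.0 - slo) * total_requests
    if allowed_failures <= 0:
        raise ValueError("SLO and traffic leave no error budget to measure")
    return 1.0 - failed_requests / allowed_failures
```

For instance, with a 99% SLO over 10,000 requests the budget is about 100 failures, so 50 failed requests leaves roughly half the budget unspent.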

The DORA-Harness Consensus

Both studies point to a converging truth: Elite teams no longer choose between speed and stability. They automate both.

DORA’s top clusters (6 and 7) achieved high deployment frequency and low failure rates (Google DORA, 2025). Harness calls these organizations “Velocity Leaders”: teams that encode intelligence across the entire delivery lifecycle (Harness, 2025).

Closing Thoughts

The AI revolution isn’t about writing code faster; it’s about trusting that code faster. The future belongs to teams that make machine-speed code safe, verifiable, and economically sustainable. Engineering excellence is no longer defined by human output; it’s defined by the intelligence of your automation.

References

Google DORA. (2025). 2025 State of AI-Assisted Software Development. Retrieved from https://dora.dev/research/2025/dora-report/

Harness. (2025). The State of AI in Software Engineering 2025. Retrieved from https://www.harness.io/the-state-of-ai-in-software-engineering